But perhaps the standards for maturity for a digient shouldn’t be as high as they are for a human; maybe Marco is as mature as he needs to be to make this decision. Marco seems entirely comfortable thinking of himself as a digient rather than a human. It’s possible he doesn’t fully appreciate the consequences of what he’s suggesting, but Derek can’t shake the feeling that Marco in fact understands his own nature better than Derek does. Marco and Polo aren’t human, and maybe thinking of them as if they were is a mistake, forcing them to conform to his expectations instead of letting them be themselves. Is it more respectful to treat him like a human being, or accept that he isn’t one?

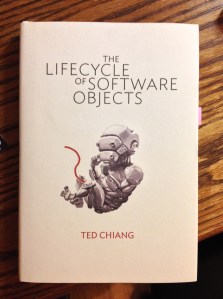

From The Lifecycle of Software Objects.

This book feels just as amazing as when I first read it about three years ago. That’s almost certainly because we’re still wrestling with how to achieve and think about human-like artificial intelligence, and author Ted Chiang has done a fantastic job grappling with the latter. In a nutshell, the story he tells is about the creation and growth of AI beings called digients, but there are so many great layers wrapped around that core, including questions of identity, maturity, sexuality, and responsibility: you know, all the deep stuff we’ve contemplated about ourselves for ages. Chiang impressively weaves cutting-edge and age-old ideas together in this enthralling work of fiction, so they come across as intriguing and intuitive. I only wish the voice of the book were a bit more “show” than “tell”, but this approach makes for a fast-moving, epic-feeling novella (the hardcover is only 150 pages).

The passage above comes at a critical turning point in the book, where the humans and digients are grappling with an attractive but disconcerting proposition that would permanently and fundamentally change the nature of some digients and their interactions with people.